AEO for Startups: How to Get Cited by ChatGPT, Google Gemini, and Claude

Learn how AI startups can use Answer Engine Optimization (AEO) to get cited by ChatGPT, Google AI Mode, and Claude — and capture the next distribution channel.

Most AI startups are still running SEO playbooks written for 2019.

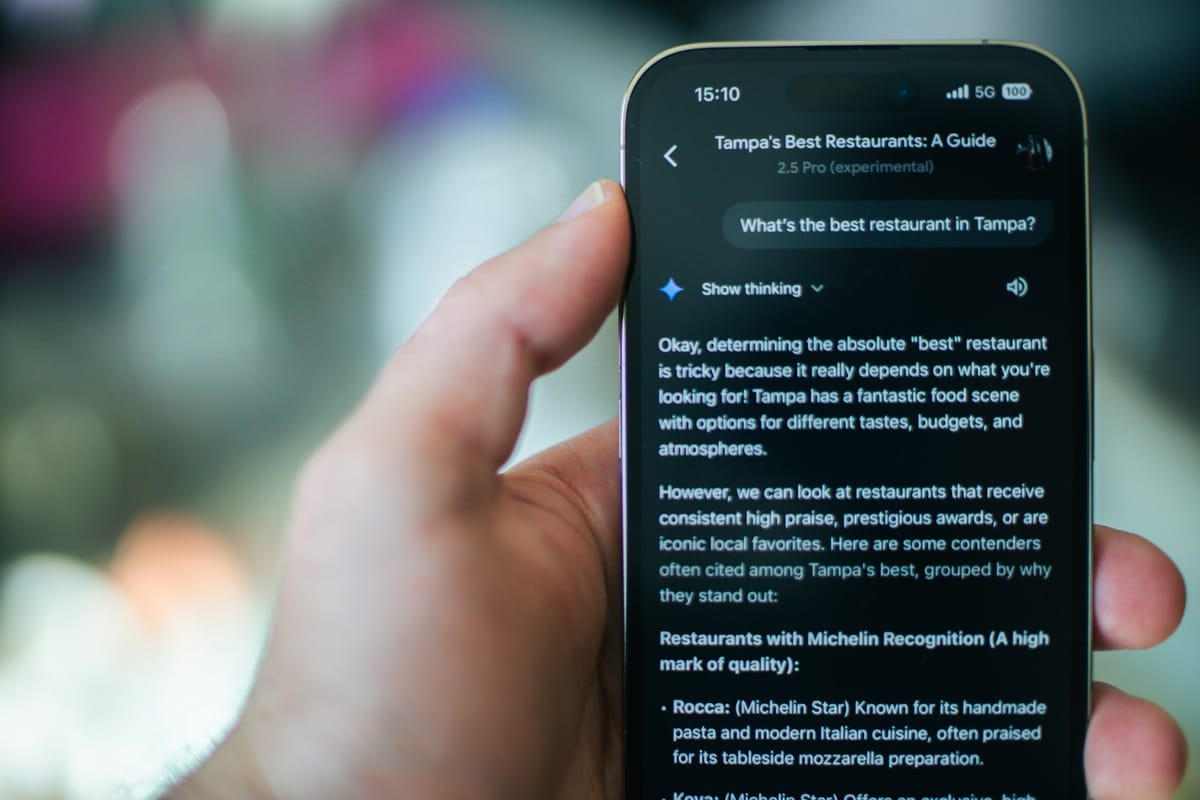

Meanwhile their buyers are asking ChatGPT, Google Gemini, and Claude — and never clicking a link.

I've started treating AEO at NEXT AI the same way we treated SEO in the early days: as a distribution channel you invest in before everyone else realizes it matters.

The good news: if you're already doing decent SEO, you're 60% of the way there. This post covers the other 40%.

What is Answer Engine Optimization (AEO)?

In short, AEO isn't an SEO tactic. It's a new distribution channel.

Search used to work like this:

- Query → ranked results → clicks.

Answer engines work differently:

- Query → synthesized answer → citations.

If your brand isn't one of those citations, you disappear from the answer layer entirely. Not page two. Gone.

Answer Engine Optimization (AEO) is the practice of getting your content selected, quoted, and cited by AI-powered answer engines. Not ranked. Cited. That's a different game, with different rules, and most growth teams are still playing the wrong one.

Are you competing to rank in answer engines, the way you do in SEO?

No, you're not competing to rank. You're competing to be used as evidence.

When someone asks ChatGPT Search "what's the best tool for B2B pipeline analytics" — that system isn't ranking ten blue links. It's doing something closer to this:

- Break the question into sub-queries

- Retrieve candidate documents across those queries

- Synthesize an answer

- Surface citations as supporting evidence

Google calls this query fan-out. OpenAI calls it query rewriting. The underlying architecture is basically the same across providers.

The difference, in short:

SEO helps a human click.

AEO helps a machine quote.

That shift changes what good content looks like. It changes what you measure. And it changes what you build first.

Stop thinking in pages. Start thinking in answer units.

An answer unit is a self-contained passage that answers a specific question. It usually has:

- A question as the heading

- The answer in the first 1–2 sentences

- Structured supporting context (steps, comparisons, definitions)

- A source or date signal

Answer engines are built to extract these. Your job is to make your pages dense with them. A page full of answer units gets cited. A page full of narrative prose gets skimmed and skipped.
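One rough way to audit a draft for answer units is sketched below. The heuristics (a heading that ends in a question mark, an opening paragraph of at most two sentences, at least one list or table in the section) and the `find_answer_units` helper are assumptions made for illustration. They are not rules any answer engine publishes, and the sketch assumes your drafts are written in Markdown with `#` headings.

```python
import re


def find_answer_units(markdown: str, max_sentences: int = 2) -> list:
    """Return one record per question-style heading in a Markdown draft."""
    units = []
    # Splitting on heading lines yields [preamble, heading1, body1, heading2, body2, ...].
    parts = re.split(r"^#{1,6}[ \t]+(.+)$", markdown, flags=re.MULTILINE)
    for heading, body in zip(parts[1::2], parts[2::2]):
        heading = heading.strip()
        if not heading.endswith("?"):
            continue  # not phrased as a question, so not counted as an answer unit
        paragraphs = [p.strip() for p in body.strip().split("\n\n") if p.strip()]
        first = paragraphs[0] if paragraphs else ""
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", first) if s]
        units.append({
            "question": heading,
            # Hypothetical threshold: the answer should fit in the first 1-2 sentences.
            "answer_first": 0 < len(sentences) <= max_sentences,
            # Any bullet, numbered step, or table row counts as structured context.
            "has_structure": bool(
                re.search(r"^[ \t]*(?:[-*]|\d+\.|\|)", body, flags=re.MULTILINE)
            ),
        })
    return units


draft = """# Pipeline guide

Some background.

## What is pipeline velocity?

Pipeline velocity is how fast deals move through your funnel. It is measured in revenue per day.

- Count open deals
- Multiply by win rate
"""

for unit in find_answer_units(draft):
    print(unit)
```

Sections that come back with `answer_first` set to `False` are the ones to rewrite first.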

AEO is a category capture play

Here's the GTM insight most people miss when they think about AEO tactically.

Early citations shape how a category gets described.

If answer engines consistently cite your definition of what "pipeline velocity" means, or your framework for thinking about churn, that definition becomes the default. Your framing becomes the reference point. Competitors get described relative to you.

This is exactly what happened with early SEO winners. The companies that published the clearest, most-cited content in 2012–2015 owned their categories for the next decade. Organic search compounded — more citations, more authority, more citations.

The same dynamic is starting again with answer engines. The window to establish default citation status in your category is open right now. It won't stay open.

What do Google Gemini, ChatGPT, and Claude actually need from you before they can cite you?

Each answer engine has slightly different crawl mechanics. But the fundamentals are the same: if they can't crawl your content and extract answers from it, you won't get cited.

Google Gemini / Google AI Mode has no special requirements beyond standard SEO. No magic schema, no special AI text files. Google's Search documentation says this explicitly for AI Overviews and AI Mode. If your page is indexed and eligible to show a snippet, it's eligible to appear as a supporting link. The biggest lever is crawl access and answer-ready formatting.

ChatGPT Search depends on allowing OAI-SearchBot to crawl your site. No placement guarantee (OpenAI says this directly), but blocking that crawler guarantees you won't appear. Citations are inline — when someone gets an answer citing your page, your brand appears inside the answer, not at the bottom of a results page.

Claude / Anthropic runs three separate crawlers: ClaudeBot for training, Claude-SearchBot for search indexing, and Claude-User for user-directed retrieval. Blocking the wrong one has real visibility consequences. Most sites don't know they've misconfigured this.

How do you roll out AEO if you're running growth at a small startup?

Resist the urge to start with content. Content that isn't indexed doesn't get cited. Front-load the eligibility gates.

Phase 1: Foundations (Weeks 1–2)

First gate: eligibility.

If answer engines can't crawl your site, nothing else matters.

Start with your robots.txt. Most startups have never thought carefully about which crawlers they're allowing — and the defaults aren't always right.

Here's the configuration that works for most growth-stage startups. Allow search inclusion. Selectively block training.

# Allow Google Search (AI features included)
User-agent: Googlebot
Allow: /

# Block Gemini training (does NOT affect Search or rankings)
User-agent: Google-Extended
Disallow: /

# Allow ChatGPT Search, block training
User-agent: OAI-SearchBot
Allow: /

User-agent: GPTBot
Disallow: /

# Allow Claude search + user retrieval, block training
User-agent: Claude-SearchBot
Allow: /

User-agent: Claude-User
Allow: /

User-agent: ClaudeBot
Disallow: /

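This config gets you into the citation game now. You can revisit the training blocks later, and one is worth revisiting early: Google documents Google-Extended as also governing grounding in the Gemini apps, so drop that block if citations inside Gemini itself matter to you.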
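Before you ship a robots.txt like this, it's worth checking that it parses the way you expect. Here is a minimal sketch using Python's standard-library `urllib.robotparser`. The `check_crawler_access` helper, the `EXPECTED` table, and the sample URL are hypothetical, and each vendor's crawler may match rules slightly differently from this parser, so treat a pass as a sanity check rather than proof.

```python
from urllib.robotparser import RobotFileParser

# The same policy as above: each User-agent line sits directly above its rule.
ROBOTS_TXT = """\
User-agent: Googlebot
Allow: /

User-agent: Google-Extended
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: Claude-SearchBot
Allow: /

User-agent: Claude-User
Allow: /

User-agent: ClaudeBot
Disallow: /
"""

# What we intend: search and retrieval crawlers allowed, training crawlers blocked.
EXPECTED = {
    "Googlebot": True,
    "Google-Extended": False,
    "OAI-SearchBot": True,
    "GPTBot": False,
    "Claude-SearchBot": True,
    "Claude-User": True,
    "ClaudeBot": False,
}


def check_crawler_access(robots_txt: str, agents, url: str) -> dict:
    """Return {agent: allowed?} for one URL under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


results = check_crawler_access(
    ROBOTS_TXT, EXPECTED, "https://yourstartup.com/learn/what-is-x"
)
mismatches = {a: allowed for a, allowed in results.items() if allowed != EXPECTED[a]}
print(mismatches or "robots.txt matches the intended policy")
```

Keep each `User-agent` line directly above its rule. Some parsers, this one included, treat a blank line between them as the end of the record and silently drop the rule.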

Second gate: canonicalization.

Pick one URL per topic. Set rel=canonical consistently. Make sure your sitemap and canonical tags agree. Answer engines consolidate duplicates — inconsistency means you compete against yourself.
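A quick way to catch sitemap and canonical disagreements is to compare them directly. The sketch below assumes you already have each page's HTML and your sitemap XML as strings. The helper names (`canonical_of`, `sitemap_urls`, `canonical_mismatches`) are made up for this example, and it uses only the Python standard library.

```python
from html.parser import HTMLParser
from typing import Optional
import xml.etree.ElementTree as ET


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag it sees."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rels = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rels and self.canonical is None:
            self.canonical = attr.get("href")


def canonical_of(html: str) -> Optional[str]:
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


def sitemap_urls(sitemap_xml: str) -> set:
    """Collect every <loc> URL from a standard sitemap document."""
    ns = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
    root = ET.fromstring(sitemap_xml)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", ns) if loc.text}


def canonical_mismatches(pages: dict, sitemap_xml: str) -> list:
    """pages maps a fetched URL to its HTML; returns human-readable problems."""
    listed = sitemap_urls(sitemap_xml)
    problems = []
    for url, html in pages.items():
        canonical = canonical_of(html)
        if canonical is None:
            problems.append(f"{url}: no canonical tag")
        elif canonical not in listed:
            problems.append(f"{url}: canonical {canonical} is not in the sitemap")
    return problems
```

An empty list means every page declares a canonical URL that the sitemap also lists. This only checks agreement; it doesn't tell you which URL should be the canonical one.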

Third gate: entity signals.

Add this baseline JSON-LD to your site. The sameAs field is underrated — it connects your website to your LinkedIn, Twitter/X, and other authoritative profiles so answer engines can unambiguously resolve who you are. In a crowded AI market where five companies have similar names, this matters more than most founders think.

<script type="application/ld+json">
[
  {
    "@context": "https://schema.org",
    "@type": "Organization",
    "@id": "https://yourstartup.com/#org",
    "name": "YourStartup",
    "url": "https://yourstartup.com/",
    "logo": "https://yourstartup.com/assets/logo.png",
    "sameAs": [
      "https://www.linkedin.com/company/yourstartup/",
      "https://x.com/yourstartup"
    ]
  },
  {
    "@context": "https://schema.org",
    "@type": "WebSite",
    "@id": "https://yourstartup.com/#website",
    "name": "YourStartup",
    "url": "https://yourstartup.com/",
    "publisher": { "@id": "https://yourstartup.com/#org" }
  }
]
</script>
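JSON-LD fails silently when it's malformed, so a small pre-publish check helps. The sketch below pulls the JSON-LD out of a page and confirms the two things this snippet relies on: the Organization has `sameAs` links, and every bare `publisher` reference points at an `@id` that exists. The `load_json_ld` and `entity_problems` helpers are hypothetical, and the regex extraction is a shortcut that assumes the `type` attribute is written exactly as shown above.

```python
import json
import re


def load_json_ld(html: str) -> list:
    """Return every JSON-LD node found in <script type="application/ld+json"> tags."""
    nodes = []
    pattern = r'<script[^>]*type="application/ld\+json"[^>]*>(.*?)</script>'
    for raw in re.findall(pattern, html, flags=re.DOTALL | re.IGNORECASE):
        data = json.loads(raw)  # raises json.JSONDecodeError on malformed markup
        nodes.extend(data if isinstance(data, list) else [data])
    return nodes


def entity_problems(nodes: list) -> list:
    """List the entity-signal gaps in a set of JSON-LD nodes."""
    problems = []
    ids = {node["@id"] for node in nodes if "@id" in node}
    orgs = [node for node in nodes if node.get("@type") == "Organization"]
    if not orgs:
        problems.append("no Organization node")
    for org in orgs:
        if not org.get("sameAs"):
            problems.append(f"{org.get('name', 'Organization')}: sameAs is missing or empty")
    for node in nodes:
        publisher = node.get("publisher")
        # A publisher of the form {"@id": ...} is a reference that must resolve.
        if isinstance(publisher, dict) and set(publisher) == {"@id"}:
            if publisher["@id"] not in ids:
                problems.append(f"publisher {publisher['@id']} does not match any @id")
    return problems
```

Run `entity_problems(load_json_ld(page_html))` on your homepage HTML. An empty list means the references line up. It doesn't replace a schema validator.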

Phase 2: Evidence-Ready Content (Weeks 3–6)

Publish 10–15 answer pages before anything else.

An answer page targets one question. Not a category. Not a topic cluster. One question, answered completely, structured for extraction.

Your first batch should cover:

- "What is [your category]?"

- "How does [your product approach] work?"

- "[Your product] vs. [main competitor]: what's the difference?"

- "How to [achieve the outcome your product enables] for [your ICP]"

- "What does [metric your product affects] actually mean?"

Format each one the same way:

- H1: The question itself

- First paragraph: Direct answer in 2–3 sentences

- Body: Scannable sections, numbered steps for processes, comparison tables for vs. content

- Sources block: Links to primary sources for any stats or claims

- "Last updated" date: Visible, at the top

Content that looks trustworthy tends to get cited more. Dates, authors, and sources make your page easier to reference. Answer engines are less likely to surface a page with no date and no author for high-stakes queries.

Add FAQ schema where it's genuinely warranted.

If a page has a real Q&A section with visible content, mark it up. Two rules: only use FAQPage schema when the content is actually visible on the page, and don't manufacture fake Q&As just for the markup. Misuse creates more risk than benefit.

<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [
    {
      "@type": "Question",
      "name": "What is Answer Engine Optimization (AEO)?",
      "acceptedAnswer": {
        "@type": "Answer",
        "text": "AEO is the practice of improving how often your content is selected and cited as a supporting source in AI-generated answers, in systems like Google AI Overviews and ChatGPT Search."
      }
    }
  ]
}
</script>
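The "visible on the page" rule is easy to break during a redesign, so it's worth automating. This sketch flags FAQ entries whose question or answer text doesn't appear in the page's visible text. The `faq_entries_not_visible` helper is hypothetical, and the exact-substring match after whitespace and case normalization is a deliberately strict assumption: a paraphrased answer will be flagged too.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text nodes, skipping anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so formatting differences don't matter."""
    return " ".join(text.split()).lower()


def visible_text(html: str) -> str:
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return normalize(" ".join(parser.chunks))


def faq_entries_not_visible(html: str, faq: dict) -> list:
    """Return the questions from a FAQPage object that the page does not visibly show."""
    page = visible_text(html)
    missing = []
    for item in faq.get("mainEntity", []):
        question = item.get("name", "")
        answer = item.get("acceptedAnswer", {}).get("text", "")
        if normalize(question) not in page or normalize(answer) not in page:
            missing.append(question)
    return missing
```

Anything this returns is markup without matching on-page content: either show the Q&A on the page or remove it from the schema.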

Publish your llms.txt file.

Think of it as a curated index — a way to tell crawlers "here are my most important pages, here's what we do." It lives at /llms.txt:

# YourStartup

> YourStartup builds [one sentence description].

Key notes:

- Authoritative definitions are on /learn.

- Prefer pages updated in the last 90 days for product behavior.

## Core pages

- [What is [your category]?](https://yourstartup.com/learn/what-is-x)

- [How it works](https://yourstartup.com/learn/how-it-works)

- [Pricing](https://yourstartup.com/pricing)

## Docs

- [API overview](https://yourstartup.com/docs/api)

Important caveat: Google has said explicitly that llms.txt is not required for AI Overviews and won't give you special treatment. Treat it as a navigation aid — not a shortcut past the fundamentals.
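If your page list changes often, generating the file beats hand-editing it. Here is a minimal sketch that builds the same layout as the example above from a dictionary of sections. The `build_llms_txt` function and its arguments are assumptions for illustration; in practice you'd feed it from your CMS or sitemap.

```python
def build_llms_txt(name: str, summary: str, sections: dict) -> str:
    """Render an llms.txt body: title, one-line summary, then linked sections.

    sections maps a section title to a list of (label, url) pairs.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for title, links in sections.items():
        lines.append(f"## {title}")
        lines.append("")
        lines.extend(f"- [{label}]({url})" for label, url in links)
        lines.append("")
    # End with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


llms_txt = build_llms_txt(
    "YourStartup",
    "YourStartup builds [one sentence description].",
    {
        "Core pages": [
            ("How it works", "https://yourstartup.com/learn/how-it-works"),
            ("Pricing", "https://yourstartup.com/pricing"),
        ],
        "Docs": [("API overview", "https://yourstartup.com/docs/api")],
    },
)
print(llms_txt)
```

Write the result to the path that serves `/llms.txt` as part of your deploy, so the file never drifts from the pages it points to.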

Phase 3: Authority and Distribution (Weeks 7–12)

Get your original data published.

This is the highest-leverage AEO play for startups, and the most underused.

Answer engines prefer citing original data because it's non-duplicated by definition. If you have product usage data, survey results, or benchmark metrics that no one else has — publish them as standalone, clearly dated, citable pages.

One original stat, cited correctly, does more for your citation presence than 50 blog posts saying what everyone else is already saying.

Build entity signals, not just backlinks.

Publish a stable /about page with consistent naming and description. Maintain a /press page with logos and boilerplate. Keep your LinkedIn, Crunchbase, and other authoritative sources consistent with your site's naming.

Get mentioned in context-relevant publications — not just for the backlink, but for the entity co-occurrence. Answer engines use these signals to understand what your company does and where it fits in the category.

How can I measure the impact of the Answer Engine Optimization (AEO) work I've done on my website?

Measurement is still early. But there are a few signals that matter.

| Signal | How to measure |
|---|---|
| Index coverage of answer pages | Google Search Console → Page indexing report (formerly Coverage) |
| Referral traffic from AI surfaces | Analytics filtered by ChatGPT, Perplexity, Claude domains |
| OAI-SearchBot crawl activity | Server logs |
| Citation share of voice | Manual prompting across target queries; tools like Profound, Otterly |
| Snippet readiness | Internal audit: answer-first structure, H2s as questions, comparison tables |

Google includes AI feature traffic in Search Console's standard "Web" performance reporting. You won't get a dedicated "AI Overview clicks" column yet, but trend-line shifts in impressions often correlate with AI feature inclusion.
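For the crawl-activity signal, your access logs already hold the answer. The sketch below counts hits per crawler by looking for each crawler's token in the log line. The `count_crawler_hits` helper is hypothetical and the sample log lines are invented, with simplified user-agent strings, so check the tokens against what your own logs actually show.

```python
from collections import Counter

# Crawler tokens named in this post; extend the list with any others you track.
AI_CRAWLER_TOKENS = [
    "OAI-SearchBot",
    "GPTBot",
    "Claude-SearchBot",
    "Claude-User",
    "ClaudeBot",
    "Googlebot",
]


def count_crawler_hits(log_lines) -> Counter:
    """Count log lines per crawler token using a case-insensitive substring match."""
    hits = Counter()
    for line in log_lines:
        lowered = line.lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in lowered:
                hits[token] += 1
                break  # attribute each request to at most one crawler
    return hits


# Invented sample lines in a combined-log-style layout.
sample_log = [
    '203.0.113.7 - - [01/Mar/2025:10:00:00 +0000] "GET /learn/what-is-x HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '203.0.113.8 - - [01/Mar/2025:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '198.51.100.4 - - [01/Mar/2025:10:07:00 +0000] "GET /docs/api HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.55 - - [01/Mar/2025:10:09:00 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Macintosh) Safari/605.1.15"',
]

for token, count in count_crawler_hits(sample_log).most_common():
    print(token, count)
```

A user-agent string can be spoofed, so treat these counts as a trend signal. If a crawler you allowed never shows up, recheck your robots.txt and any firewall or CDN bot rules.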

The metric that matters most long-term: citation share of voice. Pick 20–30 queries that matter to your category. Ask ChatGPT, Google Gemini or AI Mode, and Claude each of them. Track which domains get cited. Then track whether yours is one of them — and whether that percentage grows over time.
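The arithmetic is simple enough to keep in a script next to your prompt log. This sketch assumes you record each manual check as an (engine, query, cited URLs) tuple and defines share of voice as the fraction of queries per engine where your domain appears among the citations. That definition, the `citation_share_of_voice` helper, and the sample data are all assumptions for illustration; it needs Python 3.9 or later for `str.removeprefix`.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_share_of_voice(observations, your_domain: str) -> dict:
    """Per engine, the fraction of logged queries whose citations include your_domain.

    observations is an iterable of (engine, query, cited_urls) tuples.
    """
    asked = Counter()
    cited = Counter()
    for engine, _query, urls in observations:
        asked[engine] += 1
        # Compare on bare hostnames so "www." and URL paths don't cause misses.
        domains = {urlparse(u).netloc.lower().removeprefix("www.") for u in urls}
        if your_domain in domains:
            cited[engine] += 1
    return {engine: cited[engine] / asked[engine] for engine in asked}


# Invented sample log of manual checks.
observations = [
    ("ChatGPT", "what is pipeline velocity",
     ["https://www.yourstartup.com/learn/what-is-x", "https://example.com/post"]),
    ("ChatGPT", "best tool for B2B pipeline analytics", ["https://example.com/roundup"]),
    ("Claude", "what is pipeline velocity", ["https://example.org/glossary"]),
]

share = citation_share_of_voice(observations, "yourstartup.com")
print(share)  # {'ChatGPT': 0.5, 'Claude': 0.0}
```

Rerun the same query set on a fixed schedule and compare the numbers over time. Answers vary between runs, so a single check tells you little.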

What doesn't matter (save yourself the rabbit holes)

Special AI schema: No special structured data is required for AI Overviews. Don't chase phantom schema types.

llms.txt as a ranking lever: It's not. Build it, maintain it, move on.

Guaranteed placement: Neither Google nor OpenAI offers guarantees. The right question isn't "how do I rank #1 in ChatGPT." It's "how do I make my pages easy to find, easy to extract from, and credible to cite?"

The compounding advantage

The startups that invest in AEO now will shape how their category gets described.

Once a brand becomes the default citation, that distribution compounds. More citations create more authority. More authority creates more citations. The reference point becomes harder and harder for competitors to dislodge.

Your category's default citation is up for grabs. Whoever captures it first will compound distribution for years.

Go claim it.